VAI: Voyage AI CLI & RAG Playground

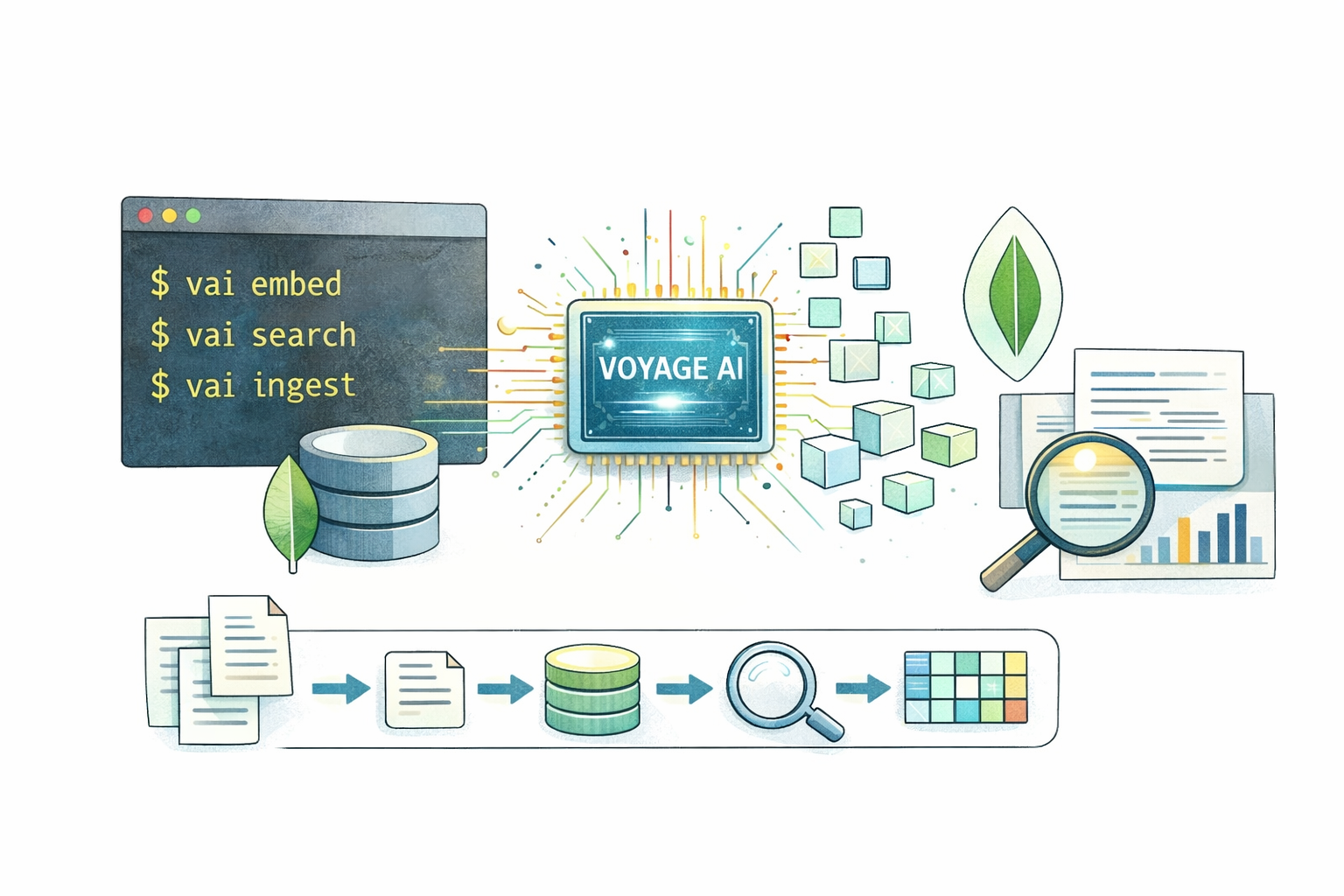

A developer toolkit for building semantic search and RAG workflows with Voyage AI embeddings, MongoDB Atlas Vector Search, local inference, and MCP integration.

By Michael Lynn • 2/19/2026


Command: vai · Repository: github.com/mrlynn/voyageai-cli

VAI (voyageai-cli) is a developer toolkit for building semantic search and RAG workflows with Voyage AI embeddings and MongoDB Atlas Vector Search. It covers the full pipeline, from chunking documents and generating embeddings to storing vectors, searching, reranking, evaluating, and integrating those capabilities into editors and applications.

What started as a CLI for hands-on vector search experiments has grown into a much broader platform: local inference with voyage-4-nano, browser-based tooling, composable JSON workflows, conversational RAG, benchmarking, evaluation, and an MCP server for AI-native development environments.

Overview

I built VAI to make semantic search approachable without forcing developers to stitch together one-off scripts for every stage of the pipeline. The goal was to give people a single tool they could use to learn the concepts, prototype locally, and then scale toward production patterns using the same mental model.

At a high level, VAI helps developers move through a familiar sequence:

- Chunk raw documents into embedding-friendly units

- Generate embeddings locally or through the Voyage AI API

- Store vectors in MongoDB Atlas

- Search and rerank results

- Evaluate quality, benchmark tradeoffs, and integrate the workflow into apps or AI tools

Key Features

1. End-to-End RAG Pipeline

The core workflow is still the fastest path from documents to a searchable vector collection:

```bash
vai pipeline ./docs/ --db myapp --collection knowledge --create-index
```

- Reads files recursively (.txt, .md, .html, .json, .jsonl, .pdf)

- Chunks with a configurable strategy (fixed, sentence, paragraph, recursive, markdown)

- Generates embeddings with Voyage AI or local nano inference

- Writes to MongoDB Atlas with metadata

- Can create the Atlas Vector Search index automatically
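The first stage, walking a directory tree and keeping only supported file types, can be sketched in a few lines. This is a minimal illustration using Python's pathlib, not VAI's own reader (that lives in the Node.js code base). The helper name collect_files is hypothetical, and the extension list is the one given above.

```python
from pathlib import Path

# Extensions the pipeline is described as reading; matched case-insensitively here.
SUPPORTED_EXTENSIONS = {".txt", ".md", ".html", ".json", ".jsonl", ".pdf"}


def collect_files(root: str) -> list[Path]:
    """Return every supported file under root, searching subdirectories recursively.

    Hypothetical helper for illustration; VAI's real discovery logic may differ,
    for example in how it treats hidden directories or symlinks.
    """
    return sorted(
        path
        for path in Path(root).rglob("*")
        if path.is_file() and path.suffix.lower() in SUPPORTED_EXTENSIONS
    )
```

Calling collect_files("./docs") mirrors the ./docs/ argument in the command above. Each returned path would then go to a format-specific parser before chunking.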

2. Local Inference with voyage-4-nano

One of the biggest recent additions is a zero-API-key on-ramp using local inference:

```bash
npm install -g voyageai-cli
vai nano setup
vai embed "What is vector search?" --local
```

This uses a lightweight Python bridge under the hood so developers can run voyage-4-nano on their own machine. The important product decision here is that local mode fits into the same workflow as hosted Voyage 4 models, so developers can start locally and scale later without relearning the tool.

3. Flexible Chunking

| Strategy | Description |
|---|---|
| fixed | Fixed-size chunks |
| sentence | Sentence boundaries |
| paragraph | Paragraph boundaries |
| recursive | Recursive splitting (default) |
| markdown | Heading-aware markdown chunking |

Chunk size, overlap, and output format (JSONL, JSON, or stdout) are all configurable, and Markdown files can use the markdown strategy automatically.
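Size and overlap interact simply in the fixed strategy. Each chunk starts (size − overlap) characters after the previous one, so neighboring chunks share overlap characters of context. Below is a minimal character-based sketch. The function name fixed_chunks is hypothetical, and VAI may measure chunk size in other units, such as tokens.

```python
def fixed_chunks(text: str, size: int, overlap: int) -> list[str]:
    """Split text into windows of at most `size` characters.

    Consecutive windows share `overlap` characters. Character-based sketch only.
    """
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    step = size - overlap  # how far each new chunk advances
    chunks: list[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):  # this window already reaches the end
            break
        start += step
    return chunks
```

For example, fixed_chunks("abcdefghij", 4, 1) returns ["abcd", "defg", "ghij"]. Each boundary character appears in two chunks, which keeps sentences that straddle a cut retrievable from either side.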

4. Search, Query, and Rerank

VAI supports both raw vector similarity search and two-stage retrieval:

```bash
vai query "authentication guide" --db myapp --collection docs
```

That flow looks like:

- Embed the query

- Run MongoDB Atlas $vectorSearch to retrieve candidates

- Rerank candidates with Voyage reranking models

- Return better-ordered results with scores and filtering support

This makes it practical to compare retrieval quality with and without reranking while using the same data pipeline.
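The two stages can be sketched as an aggregation pipeline followed by a reorder step. This assumes PyMongo-style pipeline dicts. The $vectorSearch fields (index, path, queryVector, numCandidates, limit, filter) and the vectorSearchScore metadata are part of Atlas Vector Search itself. The index name vector_index, the field names embedding and text, and the rerank_score callable are hypothetical. They stand in for VAI's own configuration and for a call to a Voyage reranking model.

```python
from typing import Any, Callable, Optional


def build_vector_search_pipeline(
    query_vector: list[float],
    index: str = "vector_index",  # assumed index name
    path: str = "embedding",      # assumed field holding the stored vectors
    num_candidates: int = 100,
    limit: int = 20,
    pre_filter: Optional[dict] = None,
) -> list[dict[str, Any]]:
    """Stage 1: approximate nearest-neighbor retrieval in MongoDB Atlas."""
    stage: dict[str, Any] = {
        "index": index,
        "path": path,
        "queryVector": query_vector,
        "numCandidates": num_candidates,
        "limit": limit,
    }
    if pre_filter is not None:
        stage["filter"] = pre_filter  # only works on fields indexed as "filter"
    return [
        {"$vectorSearch": stage},
        {"$project": {"text": 1, "score": {"$meta": "vectorSearchScore"}}},
    ]


def rerank(
    query: str,
    candidates: list[dict[str, Any]],
    rerank_score: Callable[[str, str], float],  # hypothetical reranker call
    top_k: int = 5,
) -> list[dict[str, Any]]:
    """Stage 2: rescore each candidate against the query and keep the best top_k."""
    rescored = [{**doc, "rerank_score": rerank_score(query, doc["text"])} for doc in candidates]
    rescored.sort(key=lambda doc: doc["rerank_score"], reverse=True)
    return rescored[:top_k]
```

The pipeline would run through collection.aggregate(...), and the resulting documents would be passed to rerank. Keeping numCandidates well above limit gives the approximate search more room to find good matches. The typically more expensive reranker then only has to score the short list.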

5. Web Playground

The local playground adds a visual interface on top of the CLI:

```bash
vai playground
```

The current playground includes seven interactive tabs:

- Embed

- Similarity

- Rerank

- Search

- Models

- Chat

- Explain

It is designed for teaching, experimentation, and quick validation of search and embedding behavior without leaving the browser.
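The Similarity tab compares texts through their embeddings. The post does not say which measure it displays. Cosine similarity is the usual choice for comparing embeddings, and a dependency-free sketch of it looks like this. The function is illustrative, not taken from VAI.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)
```

Vectors pointing the same way score 1.0, orthogonal vectors score 0.0, and opposite vectors score -1.0. When embeddings are already normalized to unit length, the measure reduces to a plain dot product.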

6. Conversational RAG and AI Tooling

VAI now extends beyond classic CLI workflows:

- vai chat adds conversational RAG with Anthropic, OpenAI, or Ollama

- vai mcp exposes VAI as an MCP server for AI-powered editors

- vai mcp install wires those tools into Claude Desktop, Cursor, Windsurf, and VS Code

That MCP support is a meaningful shift in scope. VAI is no longer just a terminal utility; it can now act as infrastructure for AI-assisted coding and knowledge retrieval inside developer tools.
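Some editors read MCP servers from a JSON file. Claude Desktop, for example, uses claude_desktop_config.json. In that layout, registering a stdio server means adding an entry under mcpServers with a command and its args. The sketch below merges such an entry into an existing config without removing other servers. The server key "vai" and the assumption that the server starts with "vai mcp" are illustrative. What vai mcp install actually writes, and where, may differ, and other editors may use different file locations or keys.

```python
import json
from typing import Optional


def add_mcp_server(config_text: str, name: str = "vai", command: str = "vai",
                   args: Optional[list[str]] = None) -> str:
    """Return config JSON with an MCP server entry added or replaced.

    Assumes a Claude Desktop-style layout: {"mcpServers": {name: {"command": ..., "args": [...]}}}.
    The default command and args ("vai mcp") are an assumption for illustration.
    """
    config = json.loads(config_text) if config_text.strip() else {}
    servers = config.setdefault("mcpServers", {})  # keep any servers already configured
    servers[name] = {"command": command, "args": list(args) if args is not None else ["mcp"]}
    return json.dumps(config, indent=2)
```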

7. Workflows

VAI workflows let developers define multi-step RAG pipelines as JSON:

```bash
vai workflow list
vai workflow init -o my-pipeline.json
vai workflow validate my-pipeline.json
vai workflow run my-pipeline.json --input query="How does auth work?"
```

These workflows support dependencies, template expressions, and automatic parallelization of independent steps, which makes them a strong bridge between one-off experiments and repeatable production processes.
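Dependency-aware parallelization is usually done by grouping steps into waves. A step becomes ready once every step it depends on has finished, and all steps in one wave can run concurrently. The sketch below shows that scheduling idea plus a simple {{ ... }} template substitution. The step shape (id, depends_on) and the template syntax are hypothetical, chosen for illustration; they are not VAI's actual workflow schema.

```python
import re
from typing import Any


def execution_waves(steps: list[dict[str, Any]]) -> list[list[str]]:
    """Group step ids into waves of mutually independent steps.

    Each step is assumed (hypothetically) to look like {"id": str, "depends_on": [ids]}.
    Raises ValueError on unknown dependencies or dependency cycles.
    """
    deps = {step["id"]: set(step.get("depends_on", [])) for step in steps}
    known = set(deps)
    for step_id, needs in deps.items():
        unknown = needs - known
        if unknown:
            raise ValueError(f"step {step_id!r} depends on unknown steps {sorted(unknown)}")
    done: set[str] = set()
    waves: list[list[str]] = []
    while len(done) < len(deps):
        # Ready = not yet run, and every dependency already finished.
        ready = sorted(s for s, needs in deps.items() if s not in done and needs <= done)
        if not ready:
            raise ValueError("dependency cycle detected")
        waves.append(ready)
        done.update(ready)
    return waves


TEMPLATE = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def render(template: str, context: dict[str, Any]) -> str:
    """Replace {{ a.b }} with context["a"]["b"] (hypothetical template syntax)."""
    def lookup(match: re.Match) -> str:
        value: Any = context
        for key in match.group(1).split("."):
            value = value[key]
        return str(value)
    return TEMPLATE.sub(lookup, template)
```

Consider four steps: embed, lookup, search (which depends on embed), and answer (which depends on search and lookup). They produce the waves [["embed", "lookup"], ["search"], ["answer"]], so embed and lookup can run in parallel.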

8. Evaluation, Benchmarking, and Lifecycle Management

VAI also grew into a tool for measuring and maintaining retrieval systems, not just building them:

- vai eval measures retrieval quality with metrics like MRR, nDCG, Recall, MAP, and Precision

- vai benchmark explores model, cost, quantization, rerank, batch, and end-to-end tradeoffs

- vai purge and vai refresh help manage the embedding lifecycle as models or source content change

- vai estimate helps compare cost strategies before scaling a workload
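The ranking metrics named above have standard definitions. This minimal sketch scores one query under binary relevance, where each result is either relevant or not. MRR is the mean of reciprocal_rank over a query set, and MAP is the mean of average_precision. The function names are hypothetical, and vai eval may use graded relevance, other cutoffs, or different edge-case handling.

```python
import math


def _hits(ranked: list[str], relevant: set[str], k: int) -> int:
    """Number of relevant documents among the top k results."""
    return sum(1 for doc in ranked[:k] if doc in relevant)


def precision_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top k results that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return _hits(ranked, relevant, k) / k


def recall_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k."""
    return _hits(ranked, relevant, k) / len(relevant) if relevant else 0.0


def reciprocal_rank(ranked: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none is retrieved."""
    for rank, doc in enumerate(ranked, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


def average_precision(ranked: list[str], relevant: set[str]) -> float:
    """Mean of precision at each rank holding a relevant document."""
    if not relevant:
        return 0.0
    hits, total = 0, 0.0
    for rank, doc in enumerate(ranked, start=1):
        if doc in relevant:
            hits += 1
            total += hits / rank
    return total / len(relevant)


def ndcg_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Binary-relevance nDCG@k: DCG of this ranking divided by DCG of an ideal ranking."""
    dcg = sum(1.0 / math.log2(rank + 1)
              for rank, doc in enumerate(ranked[:k], start=1) if doc in relevant)
    idcg = sum(1.0 / math.log2(rank + 1) for rank in range(1, min(len(relevant), k) + 1))
    return dcg / idcg if idcg > 0 else 0.0
```

Take ranked = ["d3", "d1", "d7", "d2"] and relevant = {"d1", "d2"}. Precision@2, recall@2, reciprocal rank, and average precision all come out to 0.5. nDCG@4 is lower than 1 because both relevant documents sit below an irrelevant one.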

Technical Implementation

Tech Stack

Core Components

| Path | Purpose |
|---|---|
| src/cli.js | Main CLI entry and command registration |
| src/commands/ | Command modules for setup, ingestion, retrieval, evaluation, MCP, workflows, and tooling |
| src/lib/api.js | Voyage AI API client |
| src/lib/mongo.js | MongoDB Atlas connection and operations |
| src/lib/chunker.js | Five chunking strategies |
| src/lib/catalog.js | Model definitions, pricing, and benchmark metadata |
| src/lib/readers.js | File parsers (.txt, .md, .html, .json, .pdf) |
| Workflow system | JSON-based multi-step pipeline execution |
| MCP server | Editor-integrated search, embedding, and knowledge tools |

Product Surface

The current project surface is much broader than the original post described:

| Area | What it enables |
|---|---|
| CLI | Scriptable local and API-backed RAG workflows |
| Playground | Interactive exploration in the browser |
| Local inference | Zero-key onboarding with voyage-4-nano |
| MCP | AI editor integration for search and retrieval |
| Workflows | Composable JSON pipelines with dependencies |
| Evaluation | Retrieval quality measurement and comparison |
| Code generation | Generated starter code and scaffolds for apps |

MongoDB Integration

- Connection layer: Official MongoDB Node.js driver with Atlas-oriented workflows

- Storage: Embeddings, metadata, and chunked source content

- Retrieval: Atlas Vector Search via $vectorSearch

- Operational support: Index creation, data refresh, purge workflows, and collection tooling

MongoDB is central to VAI's design because it gives developers a practical place to move from experiments into real retrieval systems without changing databases between prototype and production.
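An Atlas Vector Search index over stored chunks is a small JSON definition. It declares a vector field with its dimension count and similarity function, plus any fields to pre-filter on. The sketch below builds one as a Python dict, together with one possible stored-chunk shape. The field names, the index name, and the 1024-dimension default are assumptions. The dimension must match the embedding model and output dimension actually in use.

```python
from typing import Any


def vector_index_definition(num_dimensions: int = 1024,  # assumed; must match the model
                            path: str = "embedding",      # assumed vector field name
                            similarity: str = "cosine",
                            filter_paths: tuple[str, ...] = ()) -> dict[str, Any]:
    """Build an Atlas Vector Search index definition body."""
    if similarity not in {"cosine", "euclidean", "dotProduct"}:
        raise ValueError(f"unsupported similarity: {similarity}")
    fields: list[dict[str, Any]] = [{
        "type": "vector",
        "path": path,
        "numDimensions": num_dimensions,
        "similarity": similarity,
    }]
    # Fields declared as "filter" can be used in the $vectorSearch filter option.
    fields += [{"type": "filter", "path": p} for p in filter_paths]
    return {"fields": fields}


# One possible shape for a stored chunk (all field names hypothetical).
example_chunk = {
    "text": "Atlas Vector Search indexes embeddings stored in MongoDB documents.",
    "embedding": [0.0] * 1024,  # placeholder; real values come from the embedding model
    "source": "docs/vector-search.md",
    "chunkIndex": 0,
    "model": "voyage-4-nano",
}
```

In recent PyMongo versions, a definition like this can be wrapped in SearchIndexModel(definition=..., name="vector_index", type="vectorSearch") and passed to collection.create_search_index.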

Representative Commands

| Command | Purpose |
|---|---|
| init | Initialize .vai.json project config |
| pipeline | End-to-end chunk, embed, store, and index flow |
| nano | Local voyage-4-nano setup and testing |
| query | Two-stage retrieval with reranking |
| chat | Conversational RAG with multiple providers |
| workflow run | Execute JSON-defined pipelines |
| mcp install | Install the MCP server into AI tools |
| eval | Measure retrieval quality |
| benchmark | Compare cost, speed, and quality tradeoffs |
| generate / scaffold | Produce integration code and starter projects |

Why This Project Matters

VAI solves a real gap in the vector search ecosystem: many developers understand the theory of embeddings and RAG, but the path from concept to working system is still fragmented. They need to learn chunking, embeddings, storage, retrieval, reranking, evaluation, and integration, often across multiple tools.

VAI brings those concerns together into one toolkit. It is useful both as a learning environment and as a serious prototyping tool for teams working with semantic search, RAG, and AI-enabled product features.

Challenges & Solutions

Challenge 1: Making advanced retrieval workflows approachable

RAG systems involve a lot of moving parts, and most developer tools only cover a small slice of the lifecycle.

Solution: VAI presents a unified interface across chunking, embedding, storage, search, reranking, evaluation, and integration so developers can keep one mental model as they move from experiment to production.

Challenge 2: Lowering the barrier to first success

Requiring API setup before a developer can even test the basics creates friction.

Solution: Local voyage-4-nano inference gives VAI a zero-key starting point while preserving compatibility with the broader Voyage 4 family and MongoDB-backed workflows.

Challenge 3: Meeting developers where they already work

A tool like this is more useful when it can move beyond the terminal.

Solution: The web playground, JSON workflows, generated starter code, and MCP server make VAI usable in the browser, inside apps, and directly within AI-powered editors.

Results

VAI now serves as:

- A practical CLI for semantic search and RAG prototyping

- A teaching tool for embeddings, reranking, and vector search concepts

- A local-first on-ramp with voyage-4-nano

- A bridge into AI editors through MCP

- A more complete retrieval engineering toolkit with evaluation and workflow support

Notes on Screenshots

I reviewed the screenshots folder referenced for this project, but at the moment it contains the capture script rather than committed image assets. Once the generated screenshots are available, they would make a great addition to this article alongside the playground and MCP sections.

Links

| Resource | Link |
|---|---|
| Repository | github.com/mrlynn/voyageai-cli |
| NPM | npmjs.com/package/voyageai-cli |
| Voyage AI Docs | mongodb.com/docs/voyageai/ |
| Atlas Vector Search | mongodb.com/docs/atlas/atlas-vector-search/ |

Author: Michael Lynn (Principal Staff Developer Advocate, MongoDB) · License: MIT

Community tool — not an official MongoDB or Voyage AI product.